An AI-powered learning assistant for the TryHackMe platform that guides learners through complex cybersecurity content with clarity and confidence. The experience uses contextual nudges, adaptive feedback, and motivational prompts to reduce friction, keep learners on track, and improve engagement without overwhelming them. The goal was simple: make learning feel supported, not solitary.

users reached

months of collaboration

hours of time saved

The process

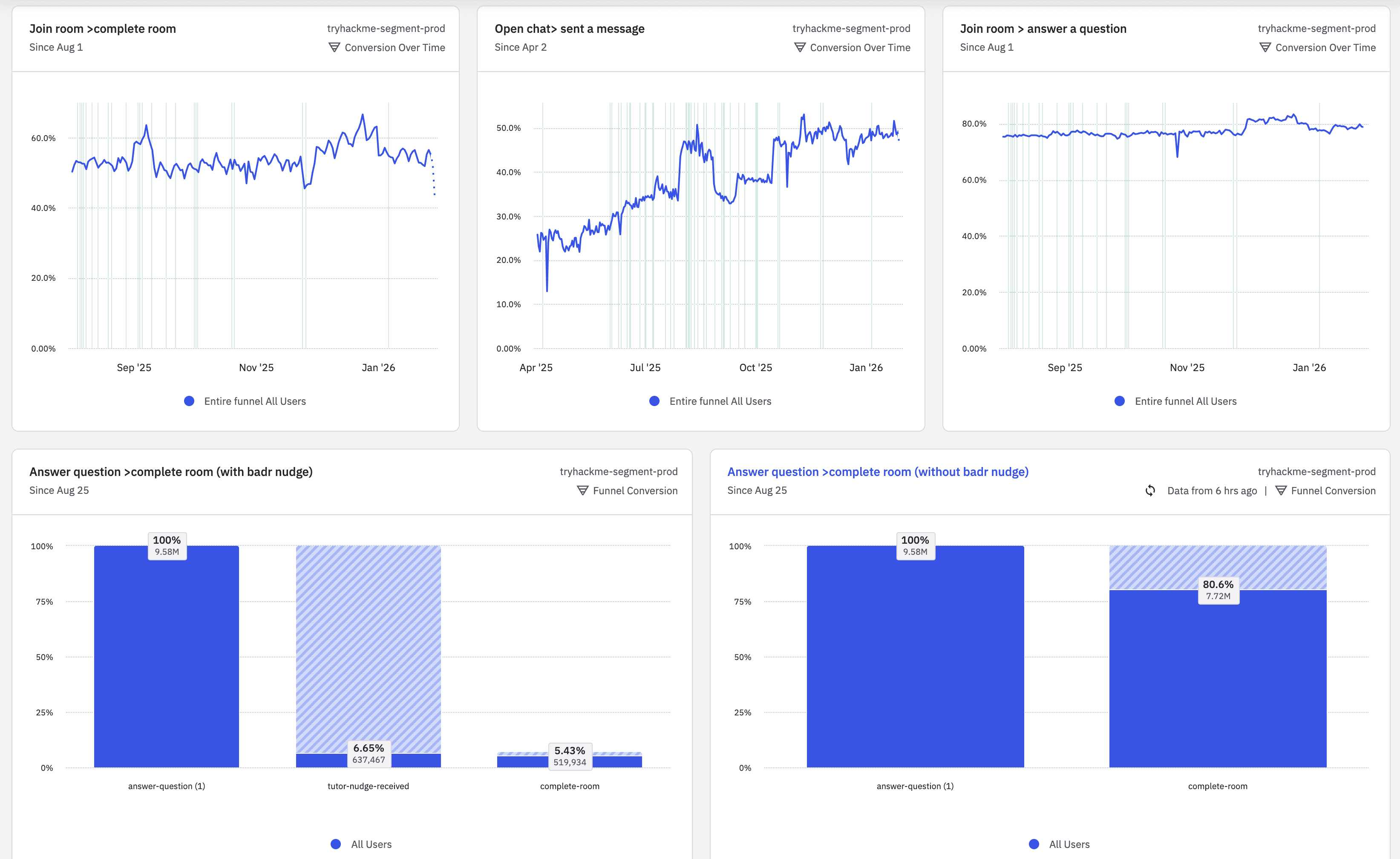

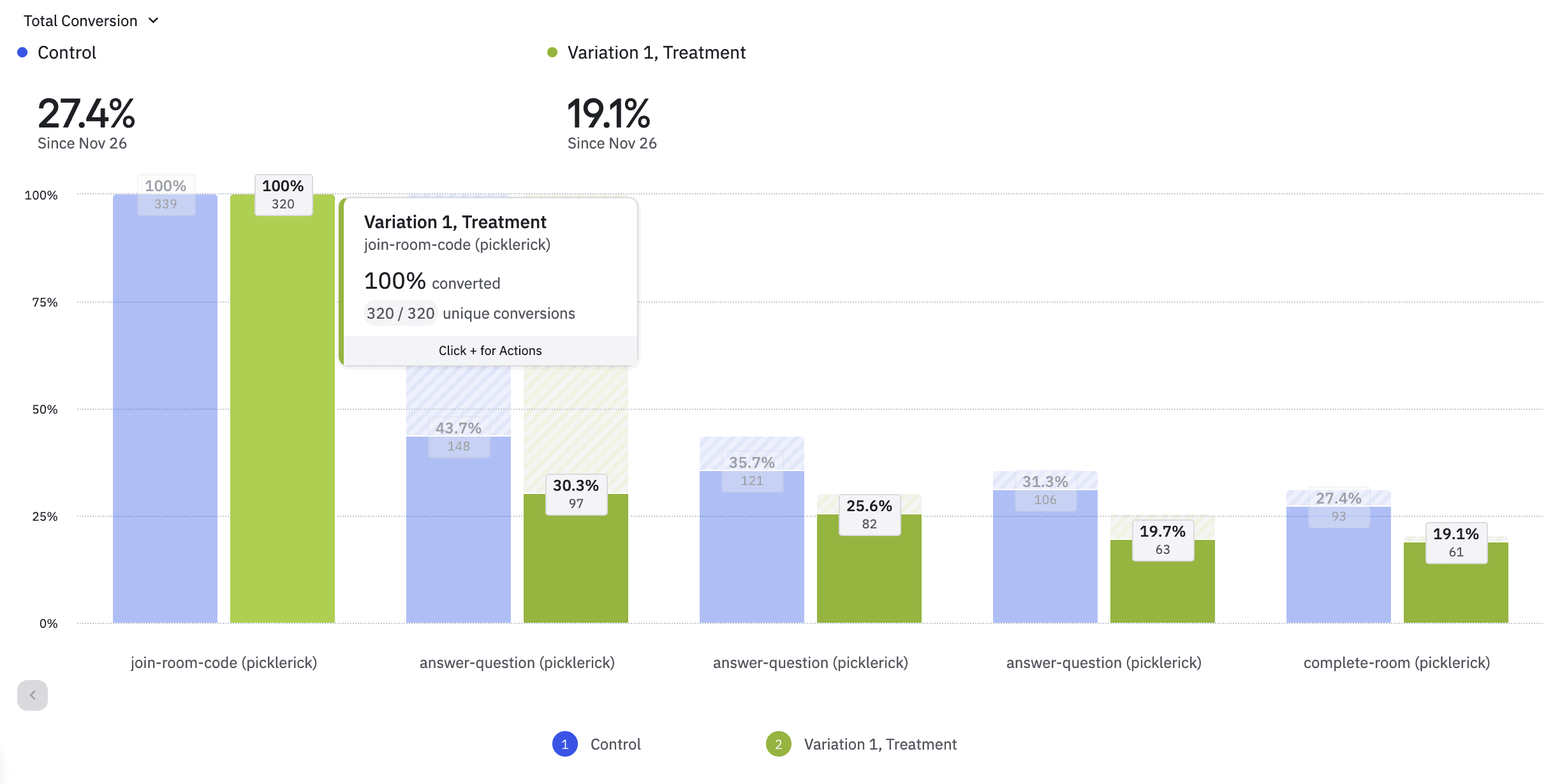

The design process balanced user empathy with data-driven decision-making. We combined behavioral insights, product analytics, and rapid prototyping to design an AI experience that feels natural, helpful, and motivating. Every design decision prioritized clarity, timing, and trust, so that the assistant enhanced learning rather than interrupting it.

Performance at scale

Satisfaction

Score

Active users