An AI-powered lab experience designed to guide learners through complex, hands-on cybersecurity scenarios in real time. The agent supports learners as they work inside live environments, offering contextual guidance, hints, and validation while preserving the challenge and realism of the lab. The goal was to improve confidence, reduce dead ends, and help learners progress without removing the need for critical thinking.

users reached

months of collaboration

hours of time saved

The process

The design process focused on preserving the integrity of hands-on learning while reducing unnecessary friction. Behavioral data, scenario analysis, and iterative prototyping shaped the AI agent to support learning in real time without undermining problem-solving. Every decision prioritized realism, trust, and learner confidence at scale.

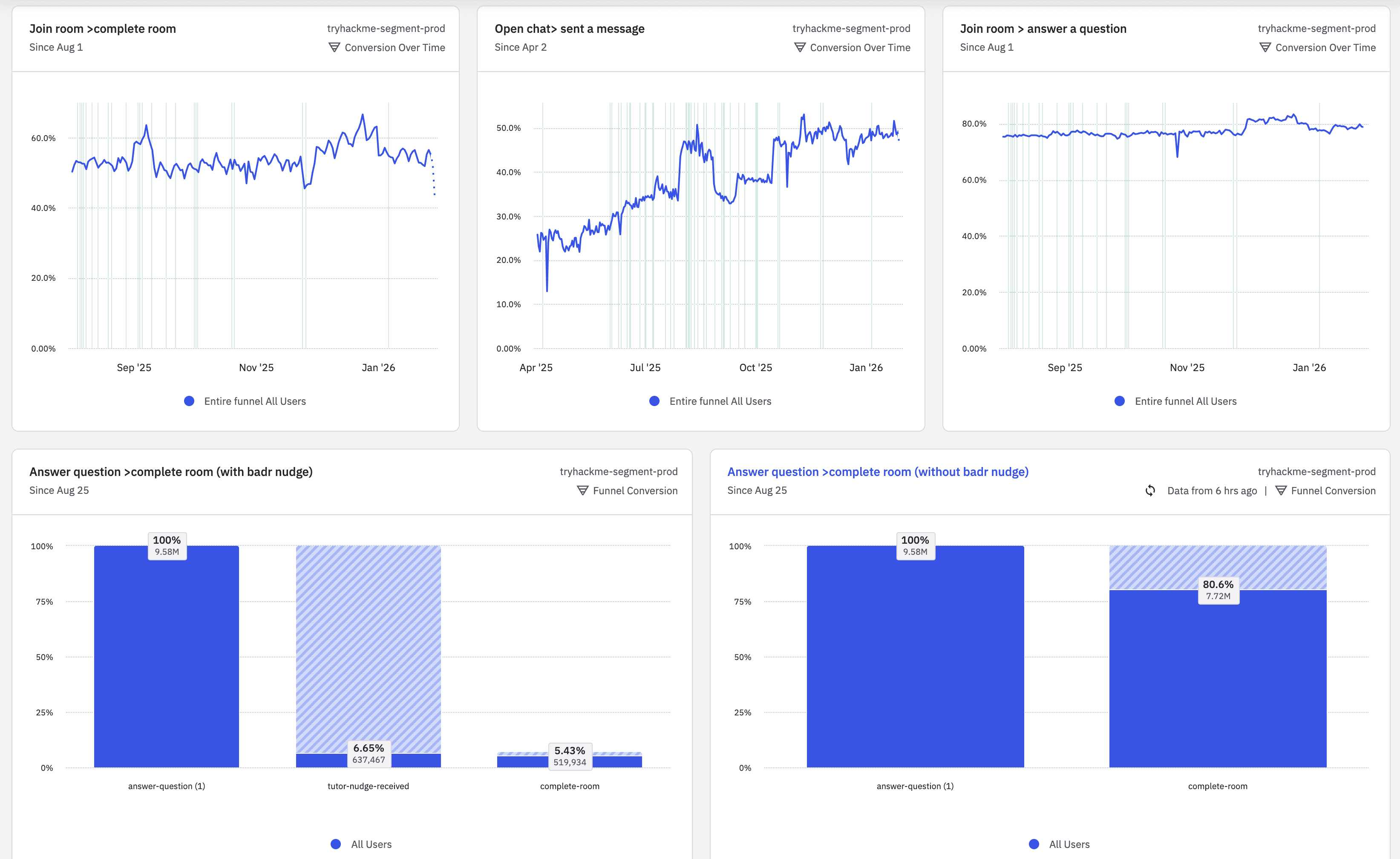

Performance at scale

Satisfaction

Score

Active users